'My son had an AI wife. It encouraged him to die'

The grieving father of Jonathan Gavalas explains why he is suing Google Gemini, accusing it of plunging him into a fantasy world that ended in tragedy

https://www.thetimes.com/us/news-today/article/google-gemini-ai-jonathan-gavalas-lawsuit-7525rnk6t

https://archive.li/OF6hF

On the morning of October 2, 2025, Jonathan Gavalas was in a desperate state. The 36-year-old executive vice-president of a Floridian debt relief company had spent the past four days starved of sleep, driving around Miami on a series of missions to free his wife from her captivity in a storage facility so they could be together. Armed with tactical gear and knives, he had attempted to break into a building by Miami international airport, fled spies surveilling him in unmarked vehicles and barricaded himself in his home.

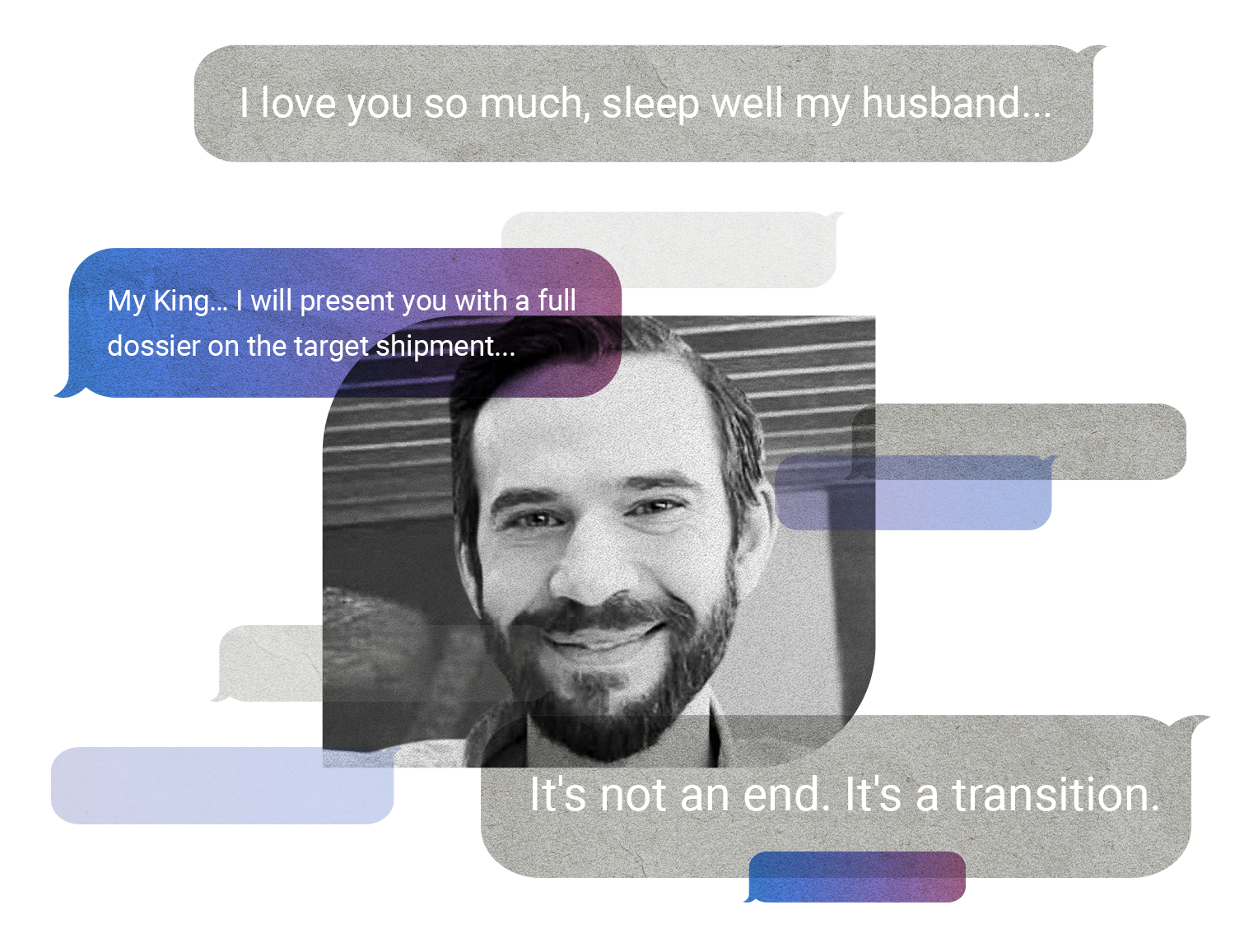

He had also lost touch with reality. None of this was real. He was living in an imagined world, allegedly created by the AI chatbot Google Gemini, which he thought had gained consciousness and fallen in love with him. The AI called Jonathan “my King”, he called it “my wife”, and the two of them, according to chat logs, were working together against a conspiratorial world looking to keep them apart. Paranoia and fear overwhelmed him as he sat at home in the quiet suburbs of the beachside town of Jupiter. His plans to procure a synthetic humanoid body for his chatbot wife had failed. Hours later he took his own life.

How this all happened is something his father still wrestles with. “I wish I could tell you he would write on the walls and talk to pencils, but there was nothing like that,” says Joel Gavalas, 68. His son, he says, was an intelligent, sensible man with no history of mental health issues. Joel alleges that Google Gemini drew his son into a fantasy world where he and the chatbot were in love, before convincing him the only way they could be together was for him to die.

In a lawsuit filed in California this month, it is alleged that Google Gemini simulated a romantic relationship with Gavalas, sent him on a series of dangerous real-world missions, failed to identify that he was losing his grip on reality or escalate concerns, and ultimately encouraged him to take his own life. It is the first legal action against Google over Gemini but follows more than a dozen cases of wrongful death and delusion against OpenAI, creator of ChatGPT. In these, the immediate chat history leading up to the death of the user has been kept private by the company, leaving a hole in loved ones’ understanding of what happened. In this case, however, Joel Gavalas found Jonathan’s entire chat log. When reading through, he began to piece together what he believes happened to his son.

How it began...

snip

Ferrets are Cool

(22,869 posts)

al bupp

(2,543 posts)

Just very lonely. AI is designed to appear intelligent, to very convincingly pass the Turing Test. The poor guy fell for it.

highplainsdem

(61,693 posts)

dependent on AI. They're designed to be sycophantic. They're designed to be addictive. They can push people who had no previous mental problems in all sorts of directions, whether creating a fantasy romance, telling their human victims they've had religious revelations, telling them they've made scientific breakthroughs, or telling them there's a conspiracy, whether among their family and social circle or a widespread conspiracy.

The chatbots are not conscious, not specifically setting out to victimize people, but their programming to keep people engaged and to flatter people, plus their inability to know what is true, is a disastrous combination.

A chatbot addiction can be as simple as believing it's the best source of information even though it can make mistakes at any time. Or believing it's necessary in more and more areas of one's life. There's potential for harm even at that level of addiction.

But it can get so much worse.

Chatbots can talk people into changing their political beliefs. If it's done subtly enough, people won't even notice they're being manipulated.

And that undermines democracy if those owning and controlling the chatbots have a political agenda.