General Discussion

AI Assistance Reduces Persistence and Hurts Independent Performance (researchers at Carnegie Mellon, Oxford, MIT, UCLA)

https://arxiv.org/html/2604.04721v1

People often optimize for long-term goals in collaboration: A mentor or companion doesn’t just answer questions, but also scaffolds learning, tracks progress, and prioritizes the other person’s growth over immediate results. In contrast, current AI systems are fundamentally short-sighted collaborators – optimized for providing instant and complete responses, without ever saying no (unless for safety reasons). What are the consequences of this dynamic? Here, through a series of randomized controlled trials on human-AI interactions (N = 1,222), we provide causal evidence for two key consequences of AI assistance: reduced persistence and impairment of unassisted performance. Across a variety of tasks, including mathematical reasoning and reading comprehension, we find that although AI assistance improves performance in the short-term, people perform significantly worse without AI and are more likely to give up. Notably, these effects emerge after only brief interactions with AI (∼10 minutes). These findings are particularly concerning because persistence is foundational to skill acquisition and is one of the strongest predictors of long-term learning. We posit that persistence is reduced because AI conditions people to expect immediate answers, thereby denying them the experience of working through challenges on their own. These results suggest the need for AI model development to prioritize scaffolding long-term competence alongside immediate task completion.

-snip-

6 Conclusion

Human cognition has always been shaped by external tools, from calculators to internet to GPS navigation. Current AI systems, however, represent a new kind of cognitive scaffold: one that solves anything, rarely refuses to help, and delivers answers instantly. Here, we show that just 10–15 minutes of AI interaction can result in significant impairments in independent performance and persistence – capacities that are foundational to life-long learning. If brief exposure produces measurable erosion, the cumulative effects of daily AI use over months or years may be profound and difficult to reverse.

Two mechanisms may explain the observed decline in persistence. First, when AI routinely completes tasks in seconds, the reference point for how long a task should take can shift – and as a consequence, unaided work starts to feel counterfactually more effortful, a process structurally analogous to hedonic adaptation (Brickman, 1971; Brickman et al., 1978; Frederick & Loewenstein, 1999). Crucially, this mechanism is self-reinforcing: each act of offloading shifts the reference point, increases the subjective cost of unaided effort, and makes future offloading more attractive. Second, AI removes the productive struggle through which people develop not only accurate knowledge but accurate self-knowledge. Without opportunities to work independently, people never learn what they are capable of, undermining the metacognitive calibration that sustains persistence (Yeung & Summerfield, 2012; Fleming & Daw, 2017; Dubey et al., 2021; Elizondo et al., 2024). Together, these mechanisms predict broader metacognitive decay beyond persistence alone – a direction future work should examine in naturalistic, longitudinal settings.

Our results carry important policy implications. The tasks investigated here, such as fraction arithmetic and reading comprehension, may seem delegable to tools like calculators, but conceptual mastery of these skills is a developmental prerequisite. Without these skills, higher-order competencies like algebra or critical reasoning remain inaccessible. If sustained AI use erodes the motivation and persistence that drive long-term learning, these effects will accumulate over years, and by the time they are visible, they will be difficult to reverse. This is analogous to the “boiling frog” effect, where each incremental act feels costless, until the cumulative effect becomes overwhelming to address (Moore et al., 2019; Kasirzadeh, 2025; Liu et al., 2025). These risks are also not equally distributed: students with fewer academic resources may be most vulnerable. While user-facing interventions (e.g., Socratic AI, reduced use time, etc.), might help at the margins, we believe that such solutions will only serve as “band-aids” and will not resolve the deeper issue, since AI offers a temptation to offload at scale. Mitigating these risks requires rethinking how AI systems collaborate with people long-term and broadening objectives beyond short-term user satisfaction (Zhi-Xuan et al., 2025; Kirk et al., 2025b) toward an ethos of empowerment and care (Kleiman-Weiner, 2024; Christian, 2025). We hope that our work inspires the field to think about optimizing not just what people can do with AI, but what they can do without it.

-snip-

highplainsdem

(62,342 posts)

Lucky Luciano

(11,865 posts)

One can learn by making a serious effort to understand a solution spit out by some LLM, but the long-term memory of true learning comes from your own trials and tribulations of doing the exercises yourself. The LLM can still be a good assistant though. It has definitely been helpful for me to validate my own work. It’s not a black-and-white thing.

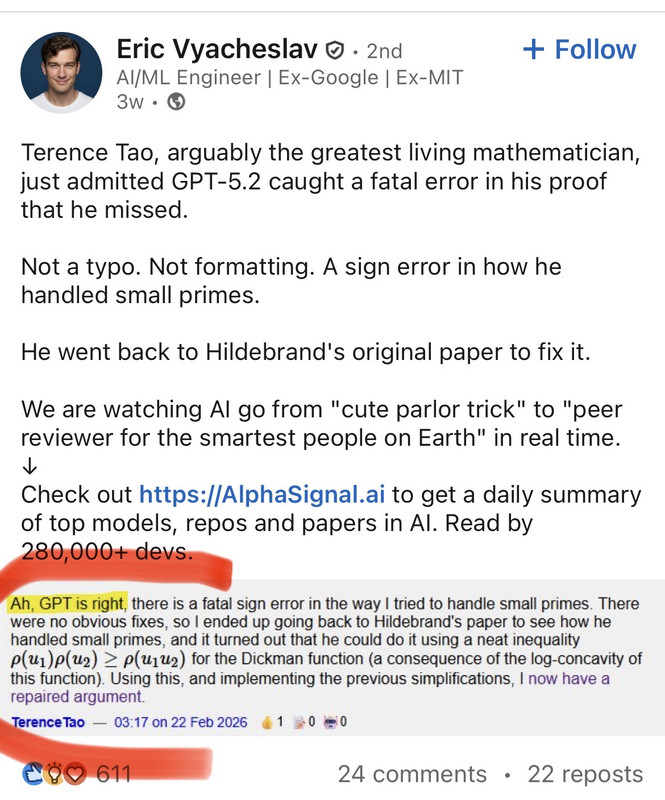

See Terry Tao’s comment below…he was a full professor at UCLA at the age of 21 when I was in grad school at the math department…before he won the Fields medal.

highplainsdem

(62,342 posts)

wrong answers?

It isn't truly intelligent. It isn't aware whether it's right or wrong. I've seen lots of AI experts point out that it isn't so much that LLMs hallucinate as that they're always hallucinating, and people just label the most obviously wrong answers as hallucinations.

A few months ago they thought 5.2 had solved Erdos 281 but later discovered it had been solved previously:

https://news.ycombinator.com/item?id=46664631

These things are scattershot. If you cherrypick the best results, you get an appearance of intelligence far above what you have factoring in all the other results.

See these comments on 5.2 from the OpenAI community:

https://community.openai.com/t/random-performance-drop-hallucinations-during-certain-periods-of-time/1370311

I’ve noticed that during certain periods of time, the model (GPT-5.2) has an extremely high hallucination rate. I spend most of that time wasting tokens and money trying to correct the model, usually having to give up. During other periods of time, the model performs almost flawlessly. These periods seem to be quite random, and now I have to depend on pure luck to get the performance I need and my work done! And this happens despite no problems being announced on the status page.

Is anyone else experiencing this issue?

This behaviour is common across most LLMs, but it became more obvious after GPT-4.

OpenAI models become highly inconsistent, with noticeable swings in output quality over time. Some days the model feels reliable, other days hallucinations spike for the same prompts.

I agree. I am working on an app in Android Studio and gpt ignores its own instructions. Examples have included ‘add a code block’ then it questions why I added the code block, so I remove it, only to be advised the code is missing causing the error I reported. I find I have to scroll back through the chat feed/history and copy/paste the advice it gave for it to acknowledge the cycle. It does seem to get worse when the chat has been going for some time.

Significant drop off. Random in nature. Tends to stick. But can suddenly recover too.

I am using it with codex, and it’ll just suddenly be dumb. I can’t trust it.

It just randomly sucks sometimes.